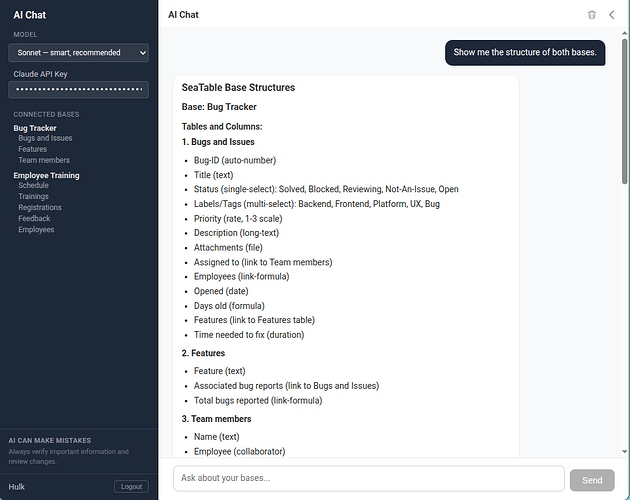

We already offer AI-powered automations with a self-hosted LLM. Now we’ve built something new: an AI Chat that lets you interact with your SeaTable data through natural conversation. You can ask questions, analyze data, create rows, and more.

Here are a few impressions:

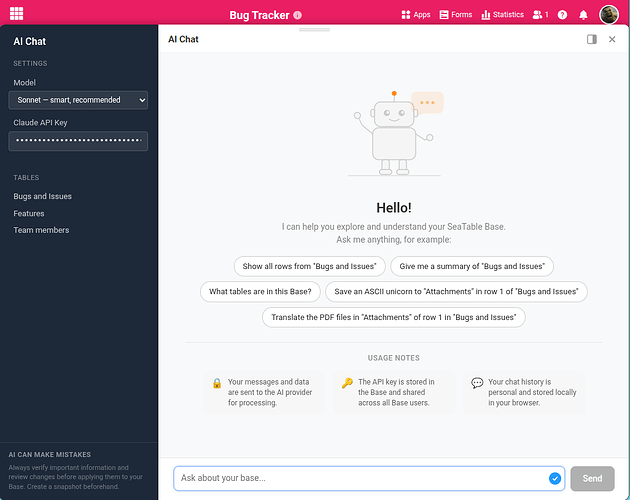

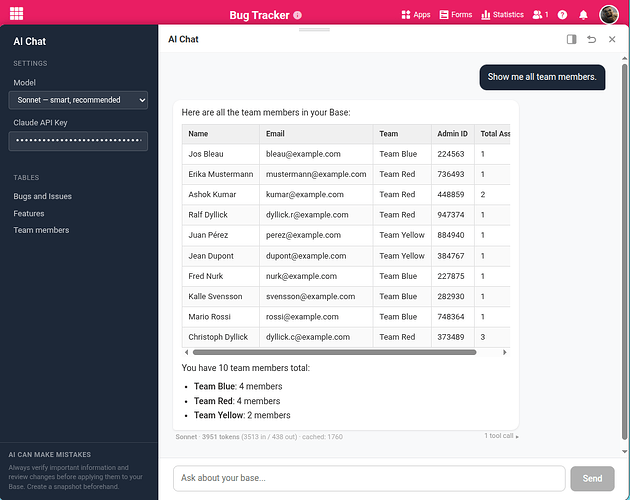

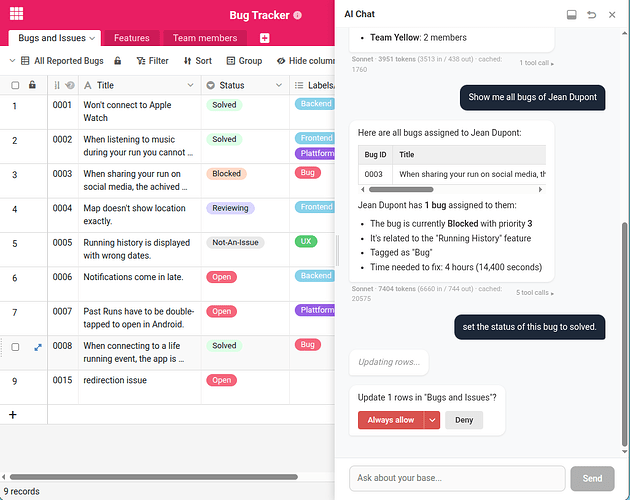

AI Chat Plugin

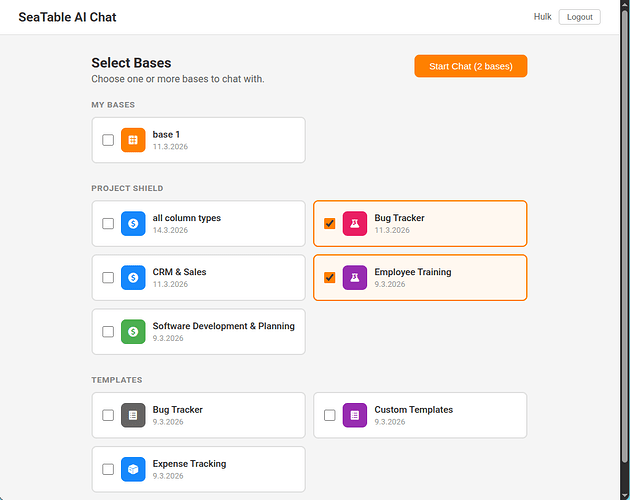

AI Chat Portal

Before we finalize the direction, we’d love your input on three decisions.

1) How should the AI Chat be integrated?

We’ve prototyped two different approaches:

- As a plugin: You open the AI Chat inside a base. You see your tables and data alongside the chat, which gives you full context. The trade-off: you interact with one base at a time.

- As a standalone chat: A separate interface where you can work with multiple bases in one conversation. The trade-off: you don’t see your data directly while chatting.

We’re leaning towards the plugin approach for the first release, since most interactions are about the data you’re currently looking at. Cross-base analysis could follow later.

What do you think? Is working within a single base with full visibility the right starting point? Or is cross-base interaction something you’d need from day one?

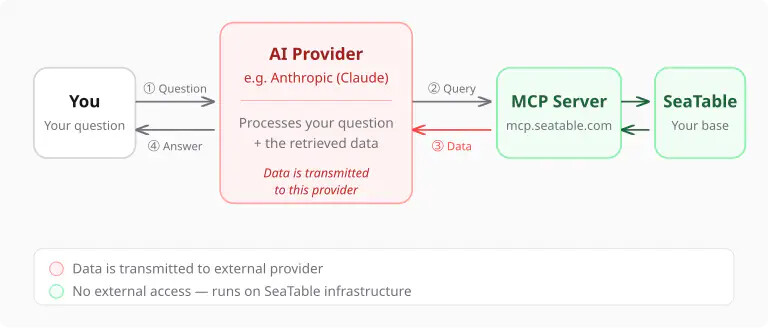

2) How should AI model access work?

The AI Chat requires a significantly more capable model than the one we use for AI automations today. It’s not realistic for us to host a model at this level in the short term.

That’s why the current approach is Bring Your Own Key (BYOK): you connect your own API key from Anthropic or OpenAI, choose your preferred model, and pay the AI provider directly. This is an established pattern used by many AI-powered tools and has real advantages for you:

- Model choice: Use the model that works best for your needs

- Cost transparency: You see exactly what you spend, no markup

- Always up to date: Access the latest models as soon as they’re available

This is different from our AI automations, where the model is included in your SeaTable subscription. We may offer a bundled option in the future, but for now BYOK gives you access to the best available models without waiting for us to catch up.

How do you feel about this? Is bringing your own API key acceptable, or would that be a dealbreaker for you?

3) Ship it as beta in v6.1?

SeaTable 6.1 is right around the corner. We could include the AI Chat plugin as a public beta in this release, giving you early access while we continue to refine it based on your feedback.

Would you like to see it in v6.1, even if it’s not fully polished yet? Or would you prefer we wait until it’s more mature?

Best regards

Christoph